AI for the frontline must be validated, not just deployed

By Resaro

With AI already being deployed in mission-critical environments, the validation approaches needed to ensure it can be trusted must catch up, according to speakers at the Milipol TechX event in Singapore.


The Strategic Partnership for Innovation (SPI) agreement between Singapore's Home Team Science and Technology Agency (HTX) and Resaro marks a shift in how AI assurance in mission-critical environments is approached: from ad hoc to structured, from explanatory to scalable, from principle to practice. Image: Resaro.

When artificial intelligence (AI) makes a mistake in a high-stakes environment, who owns it?

This was a question posed at the panel session titled ‘AI Transparency and Explainability: Can We Trust the Black Box?’ at Milipol TechX held from 28 to 30 April in Singapore.

The event gathered defence experts, cybersecurity professionals, and technology partners in a series of sessions that explored ideas and strategies to advance public safety.

Daniel Seet, Deputy Commissioner (Future Technology and Public Safety) at the Singapore Civil Defence Force, emphasised that accountability is non-delegable.

He underlined that the Home Team cannot outsource responsibility to an algorithm, as the public expects them to own the outcomes.

"Standing behind our actions is both a privilege of leadership and a sacred contract with those we serve," Seet said.

Speaking at the panel, Resaro’s Co-Chief Executive Officer, April Chin, noted that today the operational decision-maker is the one who must own the decisions they make with AI.

But the only way operational decision-makers can own that accountability is if they have confidence in the AI they’re relying on.

Confidence in complex environments, such as government, defence and public safety, cannot be assumed; it must be built through structured and systematic assurance.

That is why assurance stands as the foundation on which responsible AI deployment rests.

AI in the middle of the threat environment

With digitalisation, the security landscape today looks different from a decade ago.

Security threats are more complex than before, and AI is being used by both cyber-attackers and defenders.

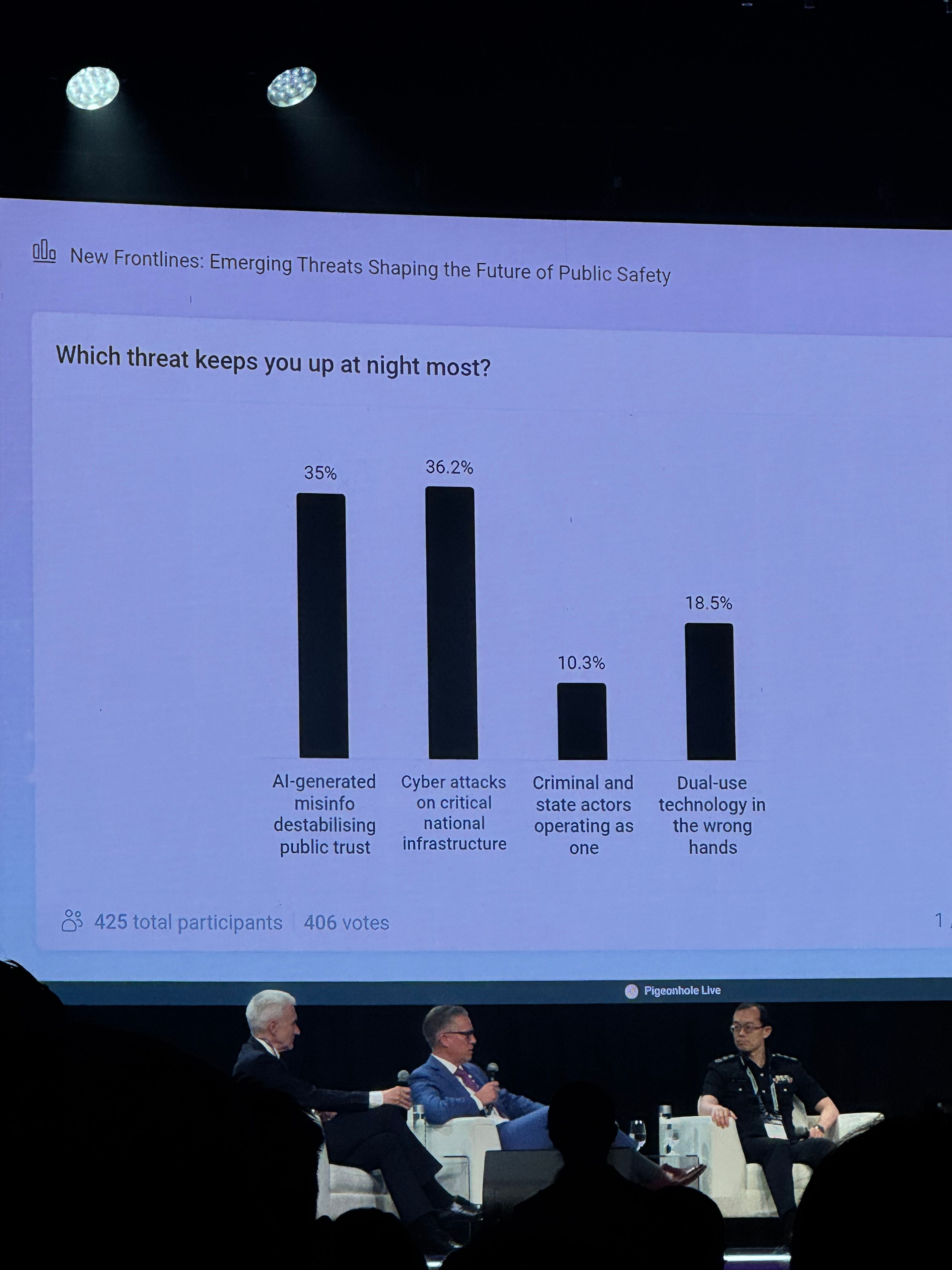

This was one of the core discussion points at another panel session titled ‘New Frontlines: Emerging Threats Shaping the Future of Public Safety’.

The rise of AI-generated synthetic media was an example of this double-edged reality, as explained by Karl Robert Schumann, Deputy Assistant Director of the Operational Technology Division at the US Federal Bureau of Investigation (FBI).

He noted that misinformation powered by deepfakes is moving from being a social nuisance to a national security threat, jeopardising citizen trust.

Such threats could be used to engineer campaigns that suppress voter turnout, for example by manipulating a candidate's words.

Losing the trust and faith of the electorate was as consequential as an attack on a power grid, noted Schumann.

The gap between governance and the ground

Translating governance into engineering practice was a salient challenge.

Resaro's Chin noted that converting a governance framework into a concrete, measurable construct remained a challenge since concepts like transparency and explainability meant different things to different people.

“We have to bring governance, performance and risk expectations into the engineering process. We have to equip them with the knowledge and tools to understand the decision boundaries of an AI system, what it is optimised for and the full extent of its risk profile, especially the long tail risk where the AI fails silently or suddenly.

“When we talk about concepts such as explainability or transparency, ultimately what we want is better confidence in the way AI is being used,” said Chin.

What “good” actually looks like

The pace and urgency of AI adoption in public safety and security continue to accelerate, along with the complexity of what rigorous assurance requires.

For the public sector to keep pace, speakers noted that the sector was increasingly turning to strategic partnerships to enhance public safety efforts.

“We [government] need to actually connect more with the private sector, to really enhance security of our infrastructure,” said Julie Brewer, Deputy Under Secretary for Science and Technology at the US Department of Homeland Security.

In Singapore, the signing of a Strategic Partnership for Innovation (SPI) agreement between Singapore's Home Team Science and Technology Agency (HTX) and Resaro marked a shift in how AI assurance in mission-critical environments is approached: from ad hoc to structured, from explanatory to scalable, from principle to practice.

The SPI will provide HTX with access to cutting-edge developments in embodied AI, autonomous systems, and AI assurance frameworks.

The partnership builds on the two organisations' work together since 2022 to develop novel approaches to AI assurance that can keep pace with the deployment of AI in critical infrastructure environments.

For AI systems supporting Home Team operations, that meant ensuring reliable performance, transparency, and operational readiness under real-world constraints before deployment.

The sensitivity of mission-critical operations meant that data must be contained within secure perimeters, systems cannot depend on external connectivity, and assurance must be embedded into the full lifecycle of AI systems.

Resaro’s Approved Intelligence Platform (AIP) provides the air-gapped infrastructure for engineers to test and validate AI systems across performance, safety, and security dimensions, and to generate results that are traceable and auditable. This gives leadership the structured deployment evidence it needs to make informed decisions.

As high-stakes environments continue to deploy AI systems, this rigorous and structured assurance infrastructure remains critical to ensuring that modern national critical infrastructure is secure, reliable, and trustworthy.