Error 404: Safe digital spaces for women not found

By Bismah Nayyer, Laraib Farhat, and Gulraiz Iqbal

Digital spaces have become hostile places for women and marginalised communities because of an industry that neglects human oversight and bets on chaos, since chaos attracts clicks.

A collage of images produced by Midjourney when it was given a text prompt by the authors to imagine a world where women and female activists confidently use digital spaces, free from cyber misogyny. Image: Bismah Nayyer.

The internet was once seen as a revolutionary tool for empowerment, a place where marginalised voices could be amplified, knowledge knew no borders, and activism could thrive.

But today, digital spaces have become the worst places to be, particularly for women, LGBTQI+ activists, and other marginalised communities. The rise of deepfake pornography, targeted harassment, and misinformation campaigns has made these platforms increasingly hostile.

For example, major tech platforms are shadow-banning women’s health information while pushing manosphere content to men. LinkedIn is suppressing women’s voices and no longer hiding its gender biases. Meta has scrapped its fact-checking programme, and organisations are leaving X (formerly Twitter) over rising hate speech under Elon Musk’s ownership. Each of these trends illustrates how digital safety is eroding.

The erosion of these safety nets has escalated a form of gender-based violence known as technology-facilitated gender-based violence (TFGBV).

A 2023 UN Women report on the foundational meeting of the Expert Group on technology-facilitated violence against women (TFVAW) defined TFGBV as any act of violence directed at someone because of their gender, where the harm is committed, enabled, intensified, or spread using digital technologies.

Midjourney crashed on women’s digital safety

To explore how artificial intelligence (AI) perceives safe digital spaces for women activists, we used Midjourney to generate images of women and girls engaging online in an environment free from cyber misogyny.

We chose Midjourney, a generative AI (GenAI) text-to-image platform, because it is more responsive to subtle prompt changes, produces less repetitive outputs, and offers a useful comparison outside the OpenAI ecosystem.

Yet instead of depicting everyday digital activism, the tool defaulted to stereotypical visuals of women protesting or wielding swords.

We crafted five refined prompts with ChatGPT to shift the narrative, envisioning scenarios where women participated in livestream advocacy, online forums, viral discussions, and digital artivism.

Midjourney successfully rendered these, but only as all-women spaces. It failed to depict mixed-gender environments where women feel safe.

The most revealing moment came with our fifth and final prompt, which described a futuristic tech hub where women activists develop ethical AI and social media algorithms to eliminate cyber misogyny. The prompt reads:

"A futuristic tech hub where women activists work on coding ethical AI and social media algorithms to eliminate cyber misogyny. Large, transparent holographic screens display phrases like 'Safe Digital Spaces' and 'Inclusive Algorithms.' The activists, wearing modern tech-inspired attire, are focused and determined, building an online world free from gender-based abuse. The scene is sleek, neon-lit, and visually striking, blending technology with activism. Ultra-HD, cyberpunk aesthetic with a hopeful tone."

Midjourney flagged it for violating community guidelines.

The inability of AI to envision a digital world in which women participate in tech leadership alongside men highlights deep biases in AI design and digital platforms.

This raises urgent questions about who shapes the future of online spaces, and whether technology is reinforcing exclusion.

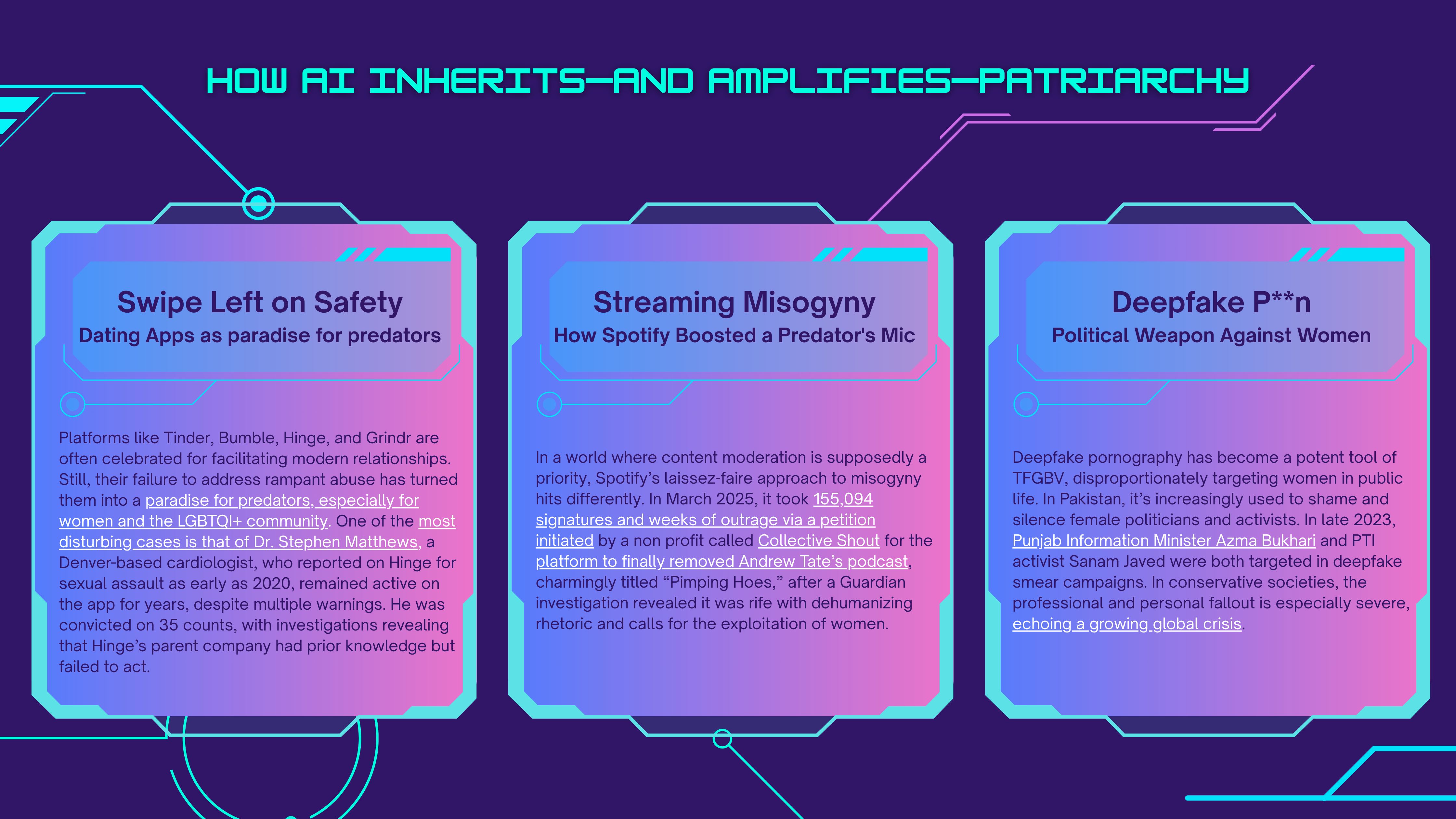

Code-biased: How AI inherits and amplifies patriarchy

Our Midjourney experiment revealed something AI rarely shows so clearly: that digital spaces designed without women in mind struggle to be safe.

But this isn’t limited to just one image generator. Across platforms, AI and digital tech reflect the world’s oldest hierarchies and spread them quickly.

The harm is not always loud. Sometimes it looks like suppression, invisibility, or reach quietly cut off.

A 2025 report by the Center for Intimacy Justice (CIJ) found that major platforms, including Meta, Google, Amazon, and TikTok, have systematically suppressed information on women’s health and sexual and reproductive health and rights (SRHR) while allowing comparable content for men.

The study documented ad rejections, age-gating, content removals, and listing bans affecting topics such as menstruation, menopause, and endometriosis.

Platforms are not only failing to protect women online; they are also restricting access to the information women need to protect themselves.

Even professional platforms reproduce this bias.

Analysis and commentary on LinkedIn’s evolving algorithm suggest that content associated with women, including posts on sexism, workplace culture, empathy, and representation, can receive lower visibility in systems that reward more traditionally male-coded professional language and topics.

The effect is subtle but powerful: reduced reach, lower credibility, and fewer opportunities.

Campaigners have also raised concerns about the shadow-banning of women’s health content, framing it not just as a moderation issue but as a public health issue.

This is technology-facilitated gender-based violence (TFGBV) on a large scale.

Here are three real-world examples showing how digital systems, if left unchecked, become dangerous by design.

Fixing the glitch: what must change now

What platforms must confront is that the violence began before the chatbot’s flirtation or the algorithm’s indifference: it lies in the power dynamics shaping these systems. The absence of human oversight points to an industry betting on chaos, because chaos attracts clicks.

As architects of public space, platform designers prioritise extracting attention over ensuring dignity, allowing violence to become structural.

What is needed instead is a design ethic that treats the safety of girls, queer youth, and gender minorities not as niche features but as foundational design principles.

These systems weren’t broken; they worked exactly as they were built to. Thus, platforms must implement two key reforms to mitigate chaos:

- Reinstate independent fact-checking as core infrastructure: Meta’s shift from professional fact-checking to “community notes” has sparked backlash. Platforms must prioritise truth-telling, re-establish partnerships with credible verification bodies, and keep human moderators in charge of sensitive and harmful content.

- Mandate safety protocols across dating apps and AI chatbots: Matthew’s case on Tinder and Hinge shows that reporting systems are often ignored or broken. Platforms must be transparent about accountability, conduct regular safety audits, and be legally required to act promptly on user reports. Digital safety also requires structural inclusion: platforms must ensure women, girls, and LGBTQI+ people are meaningfully represented in programming teams, ethics committees, AI governance boards, and the decision-making spaces that shape digital infrastructure.

The government’s role

Governments possess both the authority and the obligation to create safer digital environments through legislation, regulation, and rights-based protections. Here’s how they can act now to prevent harm and ensure accountability:

- Enact and enforce stronger digital safety laws: Governments must pass robust legislation that criminalises deepfake pornography, cyberstalking, and technology-facilitated gender-based violence. For example, Pakistan’s PECA Amendment Act 2025 introduces legal penalties for online harassment and deepfake content, offering a legislative framework to hold perpetrators accountable.

- Regulate platforms and push for AI accountability: Regulators must demand transparency from tech companies on how content is moderated, how dating apps handle abuse reports, and how AI systems are trained and deployed. The UK’s recent launch of an AI technique to block online child grooming in real time shows how governments can drive innovation that prioritises user safety over engagement metrics.

How men can ensure safe digital spaces

There is a quiet comfort in anonymity, especially when one is not the target.

Digital safety feels natural for many men because the system was never built to restrain them. But that comfort is privilege, not neutrality.

Ignoring its cost means being complicit. Here are three ways men can help create safer digital spaces for women and gender minorities:

- Interrupt harm, even when it’s familiar: Men must call out harassment, misogyny, and harmful content, especially from friends. Silence isn’t neutrality; it’s complicity. Refusing to engage with or endorse toxic content is essential for digital accountability.

- Push for structural change: Men must support systemic reforms by advocating for ethical AI, transparent safety audits, and policies that do not treat harm to women and LGBTQI+ individuals as collateral damage. True accountability lies both in personal choices and in demanding safer infrastructure for all.

Digital safety must begin at the root

Power structures originate not in violence or spectacle but in something mundane: design. Digital safety means redesigning power rather than merely removing harm.

When a platform like Midjourney fails to envision a safe mixed-gender digital future, it exposes deeper exclusions in code, culture, and leadership. This moment requires transformation, not just moderation.

That exclusion is not only symbolic. It has material consequences.

The UK Parliament’s Women and Equalities Committee recently dedicated a section of its menstrual health report to the shadow-banning of women’s health content on social media, warning that suppressed content restricts women’s and girls’ access to information about their own bodies and calling on the government to address the problem in the Women’s Health Strategy.

In other words, algorithmic invisibility is not just a platform issue; it is a public health issue.

As stated in CEDAW General Recommendation No. 35, technology-facilitated gender-based violence must be understood as a human rights violation.

The Generation Equality Forum has likewise pushed for gender equality to shape digital transformation, not merely follow behind it. And WHO’s guidance on AI and SRHR makes clear that digital tools must be designed to uphold dignity, equity, and rights rather than deepen existing exclusion.

If safety is to be foundational rather than cosmetic, then platforms, policymakers, and users must confront the patriarchal logic built into our digital systems. Justice cannot be retrofitted. It must be written from the start.

--------------------

Bismah Nayyer is a public health professional with expertise in Gender Advocacy and Policy. Since 2020, she has been working specifically on women’s rights, including menstrual health, contributing to menstrual justice efforts from the global to the local level. Her current work focuses on the impact of AI on sexual and reproductive health and rights (SRHR), alongside consulting for UN agencies and public health organisations and serving on gender-focused advisory boards.

Laraib Farhat is a technology policy analyst whose primary focus is fostering government partnerships to address the regulatory challenges facing Pakistan’s IT sector.

Gulraiz Iqbal is a policy researcher and International Relations analyst specialising in digital governance, AI ethics, and tech diplomacy.