Beyond copyright: Why Asia Pacific governments must govern AI training data as public infrastructure

By Mika (Jaeyun) Noh

Countries need mechanisms enabling collective governance of cultural training data, treating it as public infrastructure requiring stewardship, not private property awaiting extraction.


Cultural policy is becoming a technology policy, whether civil servants recognise it or not. Image: Canva

Last year, South Korea's National Assembly faced an unexpected question during deliberations on the Art Promotion Act.

If artificial intelligence (AI) systems, trained on millions of Korean artworks, can now generate traditional paintings indistinguishable from human-created works, who owns the resulting output?

More urgently, who should govern the training process itself? The answer matters far beyond the arts.

How governments handle cultural training data today will set precedents for governing AI in every sector where institutional knowledge matters, including healthcare, education, public administration and scientific research.

Cultural policy is becoming a technology policy, whether civil servants recognise it or not.

The real sovereignty question: Who shapes AI's knowledge base?

When OpenAI, Google, and other predominantly US companies train their frontier models, they don't just scrape English-language websites.

They extract cultural knowledge from across the globe, including Korean traditional painting techniques, Indonesian batik patterns, Thai classical dance forms and Japanese calligraphy, without permission, compensation, or even acknowledgment.

The LAION-5B dataset powering Stable Diffusion contains billions of images representing centuries of non-Western cultural production. These aren't just pretty pictures.

They now train models that anyone can use to generate "new" works in seconds for US$20 (S$25.54) a month.

This creates a form of technological dependency distinct from infrastructure reliance.

When AI systems, trained predominantly on Western datasets, become the default tools for creation and curation worldwide, they don't just process culture—they reshape what kinds of culture get produced, recognised, and valued.

The sovereignty question isn't just who owns the compute, but who shapes the knowledge structures embedded in AI systems.

Why traditional regulatory approaches fall short

Most governments are approaching the governance of AI-generated outputs through copyright frameworks designed for industrial-era reproduction, not algorithmic learning.

While copyright addresses individual rights to specific works, it cannot govern collective cultural knowledge or address power asymmetries when millions of individual creators negotiate separately with trillion-dollar platforms.

Individual consent mechanisms fail at scale.

Artists can use technical tools like Glaze to shield their work from AI scrapers, but these are band-aids that sophisticated systems will route around.

Meanwhile, museums and archives face impossible choices: digitise collections for public access, making them vulnerable to scraping, or keep materials offline, defeating preservation goals.

The policy challenge requires institutional innovation, not just regulatory adjustment.

Asia Pacific countries need mechanisms enabling collective governance of cultural training data, treating it as public infrastructure requiring stewardship, not private property awaiting extraction.

Cultural data trusts: A model for collective governance

Data trusts offer one promising approach.

Rather than individual artists negotiating separately with AI companies, trusts pool data rights and exercise them collectively through fiduciary structures. This isn't theoretical: experiments are underway across multiple contexts.

The Serpentine Galleries' Choral Data Trust in London demonstrated proof-of-concept: 15 UK choirs collectively governed how their performance recordings could be used in AI voice synthesis.

Through deliberative polling, members established licensing terms, prohibited uses, and revenue-sharing mechanisms. When AI developers wanted training data, they negotiated with the trust, not individual singers.

South Korea's 2024 Art Promotion Act establishes legal infrastructure enabling similar models: authentication systems for cultural works, mandatory provenance tracking, and collective rights management provisions.

We are now organising a pilot trust with 50 Korean artists to test whether this scales beyond experimental contexts.

Indigenous communities worldwide are leading parallel innovations.

The Local Contexts initiative developed Traditional Knowledge Labels asserting community governance over digitised cultural materials.

These labels don't rely on Western intellectual property frameworks; they create parallel governance structures based on Indigenous data sovereignty principles.

What governments should do now

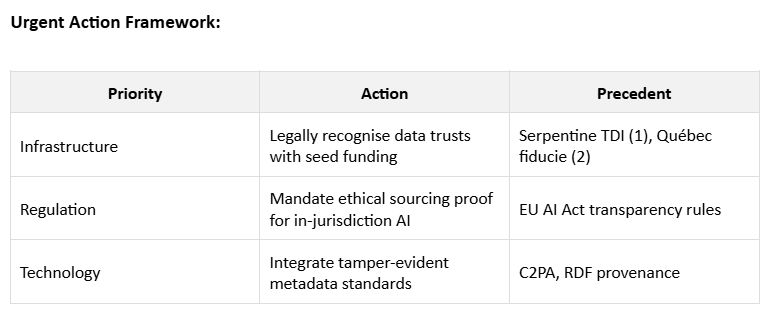

Asia Pacific countries can shape how AI systems access and use cultural knowledge from their jurisdictions. Three policy levers matter most:

First, enable collective governance infrastructure. Governments must pivot from passive regulation to proactive stewardship. By adapting existing collective management models, such as performance rights societies or agricultural cooperatives, into Cultural Data Trusts, we can consolidate fragmented creative sectors into a formidable negotiating bloc.

This creates a "sovereign clearinghouse" for cultural data: a single, high-trust interface that ensures AI developers pay for quality while creators retain their dignity.

We should not wait for a perfect global model; we need regulatory sandboxes today to iterate toward a new regional standard for data sovereignty.

Second, make ethical data sourcing a competitive advantage. Governments should require AI systems operating in-jurisdiction to demonstrate data hygiene, meaning verifiable consent, fair compensation, and respect for cultural protocols.

This is the "Brussels Effect" adapted for the Asia-Pacific: by setting high ethical standards, we turn our jurisdictions into "safe harbors" for responsible innovation.

Mandating that AI models be legally clean does not just protect creators; it de-risks the entire national AI ecosystem, making our digital markets more stable, transparent, and attractive to high-value global investment.

Third, invest in technical infrastructure. Governments must fund the development of provenance and authentication systems to track the lifecycle of cultural assets. Data is the new cultural infrastructure.

We must invest in "Digital DNA": the metadata, watermarking, and blockchain-based provenance that allow a brushstroke or a melody to be traced back to its origin. If we cannot track our data, we cannot tax, protect, or govern it.

Building this "transparency stack" is a prerequisite for any nation that wishes to remain a sovereign actor in the age of generative intelligence. Governments should also support integration with the Content Authenticity Initiative and similar efforts to establish tamper-evident metadata.

Building institutional capacity for AI governance

As AI converges with specialised domains like medicine and law, we risk outsourcing a nation’s cognitive capital to unaccountable, private algorithms.

When a profession’s core intelligence is mediated by third-party AI, the institution loses the ability to verify its own data lineage or stand behind its expertise.

This is a challenge to the "cognitive sovereignty" of our most vital social institutions.

Cultural production is the ideal regulatory lab for this broader crisis. Unlike healthcare or finance, culture allows for rapid, visible experimentation with Data Trusts and collective rights.

The frameworks we build here, by tracking provenance and ensuring community consent, will provide the legal and technical blueprints for the entire knowledge economy.

The "third way" for the Asia-Pacific

Asia-Pacific nations should not merely replicate foreign models of unfettered extraction or state surveillance. Instead, we can pioneer a third way based on collective stewardship.

AI governance is not a "cost" of regulation; it is a strategy for development. A society that merely adopts technology is a customer; a society that shapes technology is a sovereign.

The window for action

Delay is an implicit endorsement of the status quo. While committees deliberate, millions of cultural assets are being distilled into proprietary models.

If we do not establish "clearinghouses" for our data now, the market will consolidate around extractive patterns that we can neither influence nor reverse.

Sovereignty in the AI age is the "power to participate." It is the ability to walk into a negotiation and declare our knowledge a managed asset, not a raw commodity.

By governing the data today, we ensure our cultural and intellectual traditions remain active participants in the digital future, rather than museum pieces in a proprietary cloud.

--------------------------------------------------------

The author is a cultural policy strategist and researcher working at the intersection of artificial intelligence, cultural governance, and digital public infrastructure. She has worked across legislative and policy environments with the National Assembly of the Republic of Korea, the Ministry of Culture, Sports and Tourism, and the Seoul Metropolitan Council, contributing to policy discussions on cultural regulation, creative economies, and digital transformation.